Tech is changing health care as we know it. But can it make it better?

Late one night in the not-so-distant future, a man stumbles into an emergency department with slowed breathing and slurred speech. A triage nurse scans his face with an iPhone camera and records an erratic pulse and low blood pressure. At bedside, a resident dons a Google Glass headset and examines the patient while sending real-time video to a remote toxicology consultant, who confirms an opioid overdose and recommends treatment. After he’s discharged, the patient responds to a few automated text messages checking on his recovery. He also agrees to participate in a treatment monitoring program, taking pills with ingestible biosensors that track medication adherence and wearing a slim wristband that can detect relapse, which his physician oversees from her office.

We may be a long way from the futuristic sickbay of the USS Enterprise, but all of these technologies exist, and they aim to streamline physician workflow, enhance patient engagement, cut wait times and health care costs, increase patient satisfaction, and, the true endgame, help patients live longer, healthier lives. This intersection of tech and medicine, known collectively as digital health, is a small but growing field that’s already attracting deep-pocketed venture capitalists along with medical researchers, clinicians, and patients.

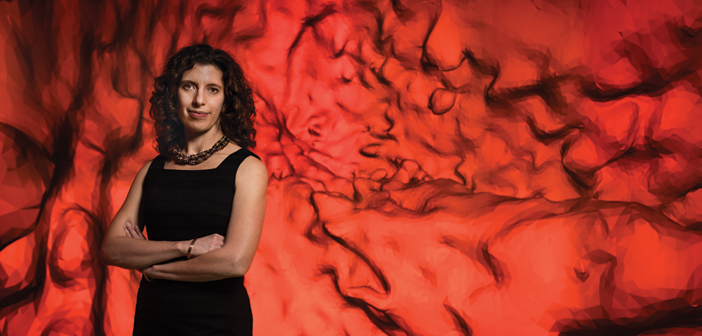

“Digital health is reinventing the whole enterprise” of health care, says Megan Ranney, MD RES’08 F’10 MPH’10, assistant professor of emergency medicine and founder of Brown’s Emergency Digital Health Innovation program. “It has the potential to transform the way we provide care and help our patients stay healthy.”

“When you look at the future of what a hospital looks like, it’s going to be fundamentally different from what it is right now,” says Edward Boyer, MD, PhD, a professor of emergency medicine at the University of Massachusetts Medical School and a frequent collaborator with Ranney and others at Brown. “What this line of research does is it blows the walls off the hospital.”

No one denies that health care in the United States is in dire need of tech’s two favorite buzzwords: disruption and innovation. “The way the health care system currently works is broken,” Ranney says. But researchers at Brown are cautious about VC and Silicon Valley’s attention. “You don’t want your grandmother taken care of by something disruptive that’s not been appropriately tested,” Leo Kobayashi ’94 MD’98, associate professor of emergency medicine, says. “You could hurt her.”

That’s why, Ranney says, it’s critical that clinicians as well as patients get in on the ground floor. “This is coming, whether we want to create it within medicine or it’s created from the outside,” Ranney says. “We”—physicians—“have a chance to keep it trustworthy and safe. Business isn’t going to do that. And frankly, government isn’t either.” Successful digital health tools must be tested in the real world, and security, ethical, and privacy issues must be considered. Effective development also depends on inclusiveness—of believers and nonbelievers, even of the technologically impaired. “I’m not a techie. I don’t know how to program,” Ranney says. “But you don’t have to, to work on this and enjoy it.”

I’m sad all the time

Nine out of 10 American teens own or have access to a cell phone; texting is far and away their favorite form of communication. Yet it surprises skeptics that automated text messaging programs can be effective health care interventions. How could a computer be as helpful as a real person?

“Teens don’t necessarily want to talk to a counselor,” says Cassandra Duarte MD’18, who is working with Ranney to study iDove, a text-based program for teenagers at risk for depression. “They would rather interact with their phone.”

And it’s not just kids. More than 90 percent of American adults have cell phones, and more than 80 percent of them text. “It’s the most ubiquitous, most stable method of communication,” Beth Bock, PhD, professor of psychiatry and human behavior and of behavioral and social sciences (research), says. Automated text messages—to remind patients to take their medication, check in after a hospitalization, or manage chronic diseases—have repeatedly proved effective and are a regular aspect of care in a few US health systems.

Bock started her career running smoking cessation support groups, and she quickly saw the utility of texting in her work. “It’s fascinating to take a face-to-face intervention and deliver it in little doses with a device they use anyway. We’re worming into people’s lives,” she says. Furthermore, text interventions are cheap, can be delivered any time of day or night, and can help many more patients. “Scaling up a text messaging program is not hard,” she says. “We could reach thousands of people.”

Even though users know messages are automated, Bock stresses they won’t work if they’re too robotic. Her smoking cessation program includes a month of messages to prepare a participant for his or her quit date (which users set themselves) and eight weeks of supportive and educational texts after, but it adapts to individual situations. People who don’t sign up until after they quit, for example, receive different messages. “And if they didn’t quit [on their selected date] and need to start over, the messaging is different,” Bock says. “We don’t want it to seem completely mindless.”
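For readers curious how such adaptation might look in software, here is a minimal sketch, not Bock's actual system. The track names, the 30-day reading of "a month," and the function `choose_track` are all assumptions made for illustration; only the month of preparation, the eight weeks of follow-up, and the separate handling of late sign-ups and restarts come from the description above.

```python
from datetime import date, timedelta

PREP_DAYS = 30        # assumption: "a month" of preparation read as 30 days
SUPPORT_WEEKS = 8     # eight weeks of supportive texts after the quit date


def choose_track(today: date, quit_date: date, enrolled_on: date,
                 quit_confirmed: bool) -> str:
    """Pick a hypothetical message track for one participant on one day."""
    if enrolled_on > quit_date:
        # Signed up only after quitting: gets a different message set.
        return "already_quit"
    if today < quit_date:
        # Still counting down to the self-selected quit date.
        return "prepare"
    if not quit_confirmed:
        # Quit date passed without quitting: start over with new messaging.
        return "restart"
    if today <= quit_date + timedelta(weeks=SUPPORT_WEEKS):
        return "support"
    return "finished"


if __name__ == "__main__":
    quit_day = date(2016, 3, 1)
    enrolled = quit_day - timedelta(days=PREP_DAYS)
    print(choose_track(date(2016, 2, 15), quit_day, enrolled, False))
    print(choose_track(date(2016, 3, 10), quit_day, enrolled, True))
```

The point of the sketch is only that a handful of plain rules, keyed to where a participant is in the process, is enough to keep the texts from reading as one-size-fits-all.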

When trying to reach teens, it’s even more important to sound authentic, she says. For a pilot aimed at heavy-drinking college students, Bock and Rochelle Rosen AM’91 PhD’02, assistant professor of behavioral and social sciences, assembled student focus groups to help write the texts, which encourage safe drinking behavior. “My concern was that text messages written by overeducated PhDs wouldn’t sound appropriate to the age group we were working with,” Bock says.

She was right. Though some of the automated messages, which are sent on Thursdays, Fridays, and Saturdays, are educational (“Never leave your drinks unattended,” “You don’t need to be wasted to have a good time”), others that students helped her develop have an authentic irreverence (“If the room is spinning, the alcohol is winning”). Bock says students advised them, “Don’t tell me how to drink, say you care about how I drink.”

Teens interviewed during the development of iDove, which targets 13- to 17-year-olds with depressive symptoms, echoed this. “[I]t would just be nice, you know, just to get a message, just sayin’ there’s people out there,” one boy told researchers. Faith Birnbaum ’10 MD’16, who also worked on the project, says, “Teens feel like someone’s there, even though they know it’s an automated program.”

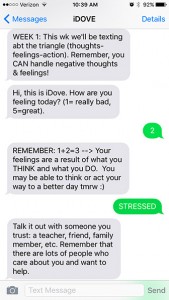

ON CALL: Automated texts from iDove may help troubled teens cope.

Participants in the iDove feasibility trial, which is ongoing, are recruited in the Hasbro Children’s Hospital emergency department. The intervention group learns some coping skills and takes home a packet with more information; then each day they receive the message, “Hi, this is iDove. How are you feeling today? (1= really bad, 5=great).” Based on the number texted back, the teen gets an automated response. For example, a 1 or 2 will prompt a reply that draws on their skills training, such as, “Remember, when something makes you feel bad, try to think of diff ways to approach the situation.” Users also can write back a word like “sad” or “stressed,” which triggers further automated responses.

iDove enrollees are cautioned that no one is monitoring the texts in real time, Ranney says. Unexpected words or numbers prompt a message advising the user to call a crisis hotline or 911 for immediate help. “We also check the text messages daily for signs of worsening mood or other potentially dangerous messages,” she says. “I have only had to call participants one or two times over more than 130 patients’ enrollment.”
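The reply logic described above can be pictured as a small lookup, sketched here under stated assumptions. This is not the iDove code: the function `route_reply`, the keyword table, and every message except the two quoted in this article are placeholders invented for illustration. What the sketch keeps from the description is the shape: low ratings draw on coping skills, certain words trigger their own replies, and anything unexpected falls through to a crisis message.

```python
# Quoted in the article.
DAILY_PROMPT = "Hi, this is iDove. How are you feeling today? (1= really bad, 5=great)"
COPING_REPLY = ("Remember, when something makes you feel bad, "
                "try to think of diff ways to approach the situation.")

# Placeholders, not actual iDove wording.
OK_REPLY = "[placeholder: encouraging reply for ratings 3 to 5]"
CRISIS_REPLY = "[placeholder: advises calling a crisis hotline or 911 for immediate help]"
KEYWORD_REPLIES = {
    "sad": "[placeholder: reply for 'sad']",
    "stressed": "[placeholder: reply for 'stressed']",
}

LOW_RATINGS = {"1", "2"}
OTHER_RATINGS = {"3", "4", "5"}


def route_reply(text: str) -> str:
    """Return the automated response for one incoming text."""
    cleaned = text.strip().lower()
    if cleaned in LOW_RATINGS:
        return COPING_REPLY
    if cleaned in OTHER_RATINGS:
        return OK_REPLY
    if cleaned in KEYWORD_REPLIES:
        return KEYWORD_REPLIES[cleaned]
    # Unexpected words or numbers get the crisis message.
    return CRISIS_REPLY


if __name__ == "__main__":
    for incoming in ["2", " Sad ", "9", "idk"]:
        print(repr(incoming), "->", route_reply(incoming))
```

Making the crisis message the default, rather than a special case, means a reply the program cannot interpret is never met with silence, which fits the caution that no one is reading the texts in real time.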

Texting lowers the bar of entry to mental health care, which can carry a stigma and be costly or difficult to access besides. Crisis Text Line, founded in 2013 by Nancy Lublin ’93, is a free, nationwide service that connects texters to live counselors. According to anonymized data compiled from the more than 12 million messages exchanged so far, many texters reach out from school: a bathroom stall, or even the cafeteria, where they’re surrounded by people. Bob Filbin, CTL’s chief data scientist, says a text feels more private and anonymous than a phone call. One teen told him, “I like the idea of texting because I wouldn’t want the person on the other end of the phone to hear me crying.”

When people contact CTL, they get an automated reply asking what’s going on. “It’s amazing how quickly they respond with really intense messages,” Filbin says, like “I think I might b pregnant” or “I’m worried I might cut again.” They’re connected with volunteer crisis counselors, who exchange dozens of messages that employ “active listening” and collaborative problem solving, and if necessary refer texters to services. “It turns out most people in crisis just need somebody to talk to,” Filbin says.

Sense and sensibility

Even the most gung-ho digital health advocates stress that texting and other tools are intended to enhance, not replace, the face-to-face clinical relationship. Text messages open the door to services a patient doesn’t have the means to get and a physician doesn’t have time to provide, or allow a doctor to reach more patients, or let a patient play a more active role in his or her own care. As practices become ever busier, texting may actually help clinicians and patients stay more connected. After all, Duarte says, “How big a difference can a 10- to 12-minute encounter with a physician really make?”

Especially in chronic disease management, it can seem like a doctor’s work is never done. Medication adherence is a frequent challenge, but self-reporting is notoriously unreliable, and a text reminder to take a pill doesn’t guarantee the patient will follow through. Even techie tools like “smart” pill bottles that record when the cap is opened offer only indirect evidence. The most reliable technique, direct observation, is expensive and impractical in most cases. It’s a ripe opportunity, says Peter Chai ’06 MMS’07 MD’10 RES’14, for a digital health solution.

Chai, a toxicology fellow at UMass Medical, is working with Ed Boyer, his mentor and the director of toxicology, to study ingestible biosensors: tiny tags attached to gelatin capsules that, when dissolved in the stomach, send a radio signal that’s picked up by a device worn by the patient. “The cool part is,” Chai says, “not only do we know in real time if they took the medication, but we can respond in real time.” The wearable device sends a signal to a HIPAA-compliant, cloud-based server, which the clinician can access and, if needed, use to send a text reminder or reinforcing message.

Chai and Boyer first tested the technology to monitor HIV medication adherence, but as toxicologists they see applications beyond disease management. “We’re giving this to patients with extremity fractures who are prescribed opioids—some of the most dangerous medications we have in medicine,” Chai says. “But our instructions are to take as needed, so we are putting the onus of a very dangerous medication on our patient.” Ingestible biosensors could tell physicians how patients interpret “as needed”: if they’re taking fewer pills than expected, doctors can prescribe less; “if they’re taking more, we should be careful not to get people hooked,” he says.

Boyer has been working with biosensors for nearly a decade, since he began developing wearables with the MIT Media Lab. As a drug abuse researcher, he wants to find ways to “be there” for his patients at all times. “You would not necessarily want to rely upon a face-to-face clinical relationship if you have a condition or a medical problem that can raise its head at any time,” such as drug cravings or relapse, he says. After years of tinkering, his wearable biosensors have evolved into unobtrusive wristbands, which Stephanie Carreiro, MD RES’13, a toxicologist and assistant professor at UMass, is using to identify relapse in cocaine- and opioid-addicted patients.

The wristband measures skin temperature and conductance, motion, and heart rate with small electrodes, Carreiro says, and streams the data wirelessly to a clinician. A drop in skin temperature, increase in skin conductance, and “hyperkinetic” activity suggest cocaine use, she says: “that constellation of findings is pretty dramatic.” Studies with cocaine users have been fairly successful so far, she says, and she’s completed a small pilot with opioid users, who exhibit nearly opposite symptoms.

“The appeal of using something more advanced is we can monitor [patients] continuously in real time,” Carreiro says. “There’s no way a urine or blood test can do that.” Furthermore, in these days of activity trackers and smart watches, wearing a wristband to monitor health trends is familiar. Patients can even download an app to see their data. “Patients have been incredibly engaged,” Carreiro says. “It takes away some of the foreignness and the hesitancy some patients have to normal medical interventions and brings it back to their level.”

She adds that patients are free to remove the bracelet at any time, “but a lot of our participants, especially the ones who are motivated, have left it on even when they relapse.” Ultimately Carreiro hopes to collect enough data to predict relapse, so the clinician can contact the patient via text message or through a sponsor to head it off.

The wristband may help clinicians not only as monitors but as users themselves. For example, because some studies have found a correlation between stress and empathetic decision making, “you can trick out residents to see who is feeling stressed in a medical encounter, to teach empathetic skills,” Boyer says. “The application of these things is very broad.”

New ways of seeing

Chai, who began working in biotech development as a grad student at Brown, was dismayed to realize how much medicine lagged behind everyday tech trends. “Someone was critically ill, we needed to urgently communicate, but we were using pagers—technology from the ’80s—sitting by the phone, waiting for a call back. It caused delays in care,” he says.

When Google introduced Glass, a hands-free, head-mounted display, in 2012, Chai saw immediate applications in the Rhode Island Hospital emergency department. His 2014 study, with Ranney and several other MDs at Alpert Medical School, was the first in the nation to use the device in the ED, to conduct remote dermatology consultations by securely streaming live video and images to a specialist, who would diagnose the condition and recommend treatment. “It takes six months to see a dermatologist, but if a patient was in the emergency room with a skin complaint, we could get an immediate consult,” Chai says.

At UMass, Chai, with Boyer, demonstrated that Google Glass also could work for live toxicology consults. He says they use it a few times a month, for difficult cases. Consultants typically log in at the office or from home, but “we can send a live video feed anywhere. They could be in Asia or Europe,” Chai says. He adds that though patients reported they’d rather see a physician in person, they preferred Glass to the typical phone consult.

Back in Providence, the dermatology study changed hospital culture, according to Susan Duffy, MPH ’81 MD’88, the medical director of the Hasbro Children’s Hospital ED. “Now [with patients’ permission] we all take pictures on our iPhones,” she says, and save them directly to their electronic medical records; the photos help to track physical changes, like the progress of a rash. EMRs can store video, too. “We tell families to take videos of abnormal movement or seizures at home and bring it with them,” Duffy says.

Smartphones are becoming as indispensable inside the hospital as they are outside. Many physicians already use them to quickly search for information about drugs, diseases, and the latest research. But they can do much more. Geoffrey Capraro, MD, MPH, assistant professor of emergency medicine, is trying out inexpensive apps and attachable devices to take vital signs and examine children’s ears. “It’s an example of iPhone-based technology that will change what we do, change patient expectations of what we do, maybe increase patient understanding of what’s going on, and allow better teaching,” he says.

Capraro, who studies pediatric sepsis, is collaborating with Leo Kobayashi to assess apps that detect vital signs remotely. “Vital signs are the bane of my existence because they are so challenging to capture in kids,” Capraro says. Children squirm, they cry, they’re afraid of cords. With the FLIR One, an infrared camera attachment, and an app that encodes each pixel with a temperature, a clinician may be able to simply scan a patient’s face to measure body surface temperature; with another app, he can see each heartbeat by using the phone’s own camera to detect subtle changes in facial coloration. “Eventually we imagine a patient in triage and with a camera system we look at the patient and get real-time vital signs,” Kobayashi says. “We may find out they need to be in critical care now.”

The accessibility of these tools means patients—or their parents—could be more directly involved in care. “A parent could assess vital signs with her child in the comfort of her arms,” Capraro says. In the hectic pediatric ED, where Capraro says vital signs aren’t taken as frequently as they should be, “it allows for more frequent monitoring.”

Capraro also is trying out Cellscope, an iPhone camera attachment and app, for otoscopies. “In pediatrics looking at ears is a basic bread-and-butter thing,” he says. But the standard otoscope is challenging for trainees. “We can’t agree on what we see or we’re not looking at the same thing,” he says. The Cellscope takes videos that can be shared with other clinicians as well as patients. “People are coming together through technology,” Capraro says. “A parent sees a video of an infected ear and says, ‘Now I know why she didn’t sleep.’”

I SEE YOU: The author’s fundus is a large, clear image on the screen of a digital retinal camera.

Elizabeth Prabhu, MD F’17, a pediatric emergency medicine fellow, has discovered that same utility with a digital retinal camera that she’s been testing. “Clinicians have varying funduscopic exam skills,” she says. Like the traditional otoscope, the ophthalmoscope found in every exam room can be tricky to use: the image is tiny, and sometimes clinicians don’t see the same things. The Horus Scope, which Prabhu got for her feasibility study in the Hasbro ED, records images that can be viewed on a screen, similar to that of a compact digital camera, and shared and saved in a medical record. “Families like to see the photos and it’s quick for attendings to use,” she says.

Duffy, who is overseeing Prabhu’s study, says her department quickly incorporated the new device into daily practice. “[Physicians] will identify a patient to take a picture for teaching purposes, or put it in the medical record, or get an image for another use,” Duffy says. “Twenty years ago, when I trained, there was very little tech. You had to rely on your clinical exam skills, your knowledge base, and your intuition.” Prabhu laughs, and Duffy continues: “We all have to be open to new techniques. … [But] it’s a tool that, as you become facile with the technology, it supplements your exam skills, it doesn’t replace them.”

Put it to the test

At up to $15,000 a pop, the Horus Scope won’t be in every exam room anytime soon. Money is only one of many factors that researchers consider when studying digital health tools. “We need to know when we are introducing new technology, is it valid? Is it feasible? What’s it cost? What’s the cost benefit? And does it lead to overtreatment?” Duffy says.

Roger Wu, MD, MBA RES’16, chief resident in emergency medicine, was a co-investigator on the Google Glass study at Rhode Island Hospital. “There’s often a lot of media hype around these new technologies … but you really need to let the dust settle and give it time to see what the real value is,” Wu says. His department stopped using Glass after the study ended, he says. “In order to successfully launch new technologies in health care, you need to keep in mind organizational culture and workflow.” Health care administrators should invest in new tech, he says, “but it’s a kind of cautious optimism. It’s important to invest with the understanding that there will be many challenges and obstacles.”

Capraro agrees. “We need to test if the performance matches the excitement factor,” he says. “We need skeptics. Does [the infrared camera] translate to clinical medicine? … It’s good we’re in a critical academic place to put this technology to task to find their limitations. There’s a lot to be learned. A lot of things fall apart as they get translated.”

Nearly everyone interviewed for this article had a story about flawed research and unethical claims: a device to help the elderly when they fall that was tested on college students; an app that supposedly could diagnose melanoma from a photo of a mole, but wasn’t tested clinically; another that scanned Twitter for signs of distress and, without users’ permission, emailed their contacts.

“Developers will develop an app for one illness only, or a fixed number of symptoms because it’s based on a book they read, instead of talking to patients,” Cyrena Gawuga ’03 AM’15 PhD’16 says. “They don’t have a concept of the user experience. They develop things the way they think things might go.”

A doctoral student in the molecular pharmacology, physiology, and biotechnology department, Gawuga came to Brown as a PLME but left the Medical School in her second year after struggling with chronic illness. She’s an avid digital health consumer, willing to try apps still in development to track her symptoms and moods, and an active participant in Twitter chats related to medical issues. “Physicians are not developers by and large, and patients are not developers by and large,” she says. “So by having groups of people work together on a project with different knowledge sets, we’ll get better apps and better tech.”

Rochelle Rosen has worked on several digital health studies to understand why and how participants use devices, texting programs, and other tools. “IT people aren’t necessarily users of tech developed for diabetes and heart disease,” she says. “Those patients are older; they’re not technology natives. The apps, the devices, the data must be relevant to their lives.”

And they have to work for the other end users: physicians. “Physician needs might include some consideration of what data are relevant, and what data are not,” Rosen says. “We want to make sure users have a voice not only in the development of apps but even the development of theories.”

“The people on the front lines, the physicians working with patients, they’re going to have the best understanding of what the problem is and what the obstacles to implementation are,” Wu says. He and Boyer say patients and clinicians must see an immediate benefit to any digital health tool for it to work. So, Boyer says, he often skips the usual research rigmarole and goes straight to real-world trials.

“If the interaction is off-putting in any way, they will never come back a second time to interact with your device. So I don’t do clinical trials,” Boyer says. “I’m interested in finding those features that make mobile health interventions deliverable for the rest of their lives.” He says he gauges a device’s effectiveness not by whether someone likes it, but whether they’ll use it again. “The best measure is leaving the technology with the patient [when a trial is over] and see if they continue to use it.”

Leveling the field

On social media and at conferences, Gawuga speaks passionately about the patient experience but seeks common ground with other constituencies. Doctors, patients, and developers can collaborate effectively only with mutual respect, which means breaking down traditional hierarchies. Social media is helping to do that, she says. “On Twitter everyone’s reaching out. Doctors are sharing information, patients are saying to doctors, ‘This is what’s important to me,’” she says.

Ranney, who is active on Twitter and has organized Twitter focus groups, arranged to meet Gawuga after a TweetChat to talk more in person about the patient perspective. Ranney believes it’s up to patients, not physicians, to decide how much privacy they want. “It’s scary for a lot of doctors,” she says. “It will level the playing field and make patients better consumers of their health care.”

Physician involvement in the development of digital health tools will better ensure that they adhere to ethical standards. Just because the technology is new doesn’t mean that time-honored commitments of doctoring, from confidentiality to the oath to “do no harm,” go out the window. Indeed, given the very real concerns about data security, it’s more important than ever that clinicians obtain patients’ consent, take steps to protect their health information, and honestly discuss privacy concerns.

This may be a greater worry for health care systems than for patients, however; in surveys, large majorities have said they want to link their medical records to non-HIPAA-compliant sites, including the data-sharing platform PatientsLikeMe and even their social media accounts. Patients who text or email with their physicians know their messages aren’t secure. “We need to be honest about the risks,” Ranney says. But we take risks every time we use a credit card, she adds. “It’s obnoxious of us to say, ‘No, you can’t have that.’”

“The reason [digital health] is coming is patients are driving it,” Boyer says. “I recognize that it might not work necessarily for my mom, but as people in my generation and yours and my son’s all get older, this will have increasing relevance for them, and that’s why we need it.”

See one, do one, teach one

That’s also why medical schools need to start incorporating digital health into the curriculum. Many devices have the potential to improve not only patient care but clinical training. “The medical education component of this is vast,” Duffy says. Large, clear, shareable images of the inner ear or the back of the eye benefit learners and teachers alike. Remote consults give residents instant access to specialized knowledge. Hardware and software that gather and analyze patient data help trainees see patterns and make medical decisions.

But most digital tools are so new that teachers are learning to use them alongside their students. And in a busy practice, who has time for that? “It’s going to be 15 to 20 years before digital health is ubiquitous in medicine,” Ranney says. She’s launching a preclinical elective in the fall, with the assistance of Margie Thorsen ’15 MD’19, that will include workshops where students can explore how tech can be used in medical settings, and develop their own ideas for a final project. The medical students who learn now how to use and develop digital health tools and, most importantly, how to adapt when something new comes along, will be leaders in the future.

Duffy isn’t ready to put her older colleagues out to pasture just yet. In every age group there are quick adapters and slow adapters, she says. The quick adapters will try out the latest tools, push buttons and play around and figure out how they work. The slow adapters wait and see. But if the technology works, she says, they come around. “When you realize the benefits to patients, you’ll keep up.”